Some people are boasting about how quickly they get a paper done with AI. That provoked the obvious retort: “if you write your paper in 3 days, I’ll AI-review it in 20 minutes. Why should I give it more attention than you did?”

Speed is a bad proxy for quality. Not because faster is necessarily worse (sometimes it is). Because “how fast” answers nothing without “fast how”.

The backdrop

Two things give the retort its bite:

ICLR 2026: ~21% of reviews were flagged as fully AI-generated. Several reviewers were caught pasting artifact phrases like “Here is a revised version of your review with improved clarity” directly into the review text. (Pangram Labs)

NeurIPS 2025: ~100 hallucinated citations across 51 accepted papers — vibe citing, in GPTZero’s coinage. (GPTZero)1

Slop is overwhelming the pipeline.

But it’s not slop because it’s AI. It’s slop because it’s slop.

Earned speed vs delegated speed

When I wrote my first review, it took me hours. Now I am much faster. My first paper took years; drafting goes faster now, even on projects where I do not lean on AI. The same holds for reading and rebuttals.

That kind of speed is earned. Fluency and experience make you faster because you no longer need to deliberately assess every step: you have seen this type of problem before, so you can work from trained intuition.2

Earned speed is a byproduct of expertise. Delegated speed is a substitute for it.

There is a subtler cost. Earned speed is the residue of the work that built it; delegated speed bypasses that work. Every task you outsource is a task that doesn’t build your expertise. The nuance lies in telling apart the skills you will actually need from the ones you won’t. Fast now, stuck later.

The retort flattens both into one number. Three days of fluent drafting and three days of vibe-writing produce different artifacts.

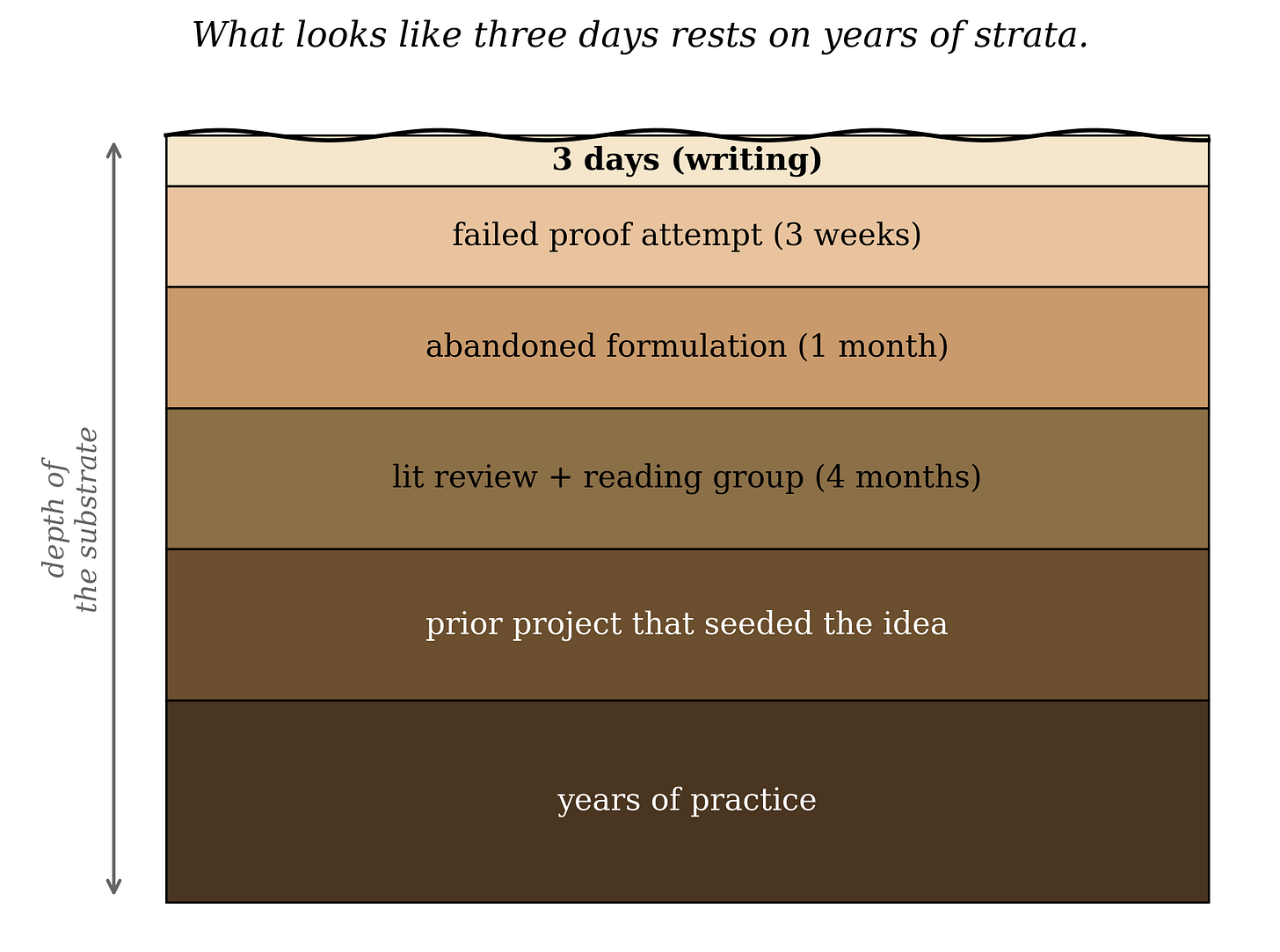

Cal Newport made a related point about thesis-writing clinics: people show up assuming the writing is the hard part. It rarely is; the thinking is. If you know what you want to say, the writing can be fast. My mentor lost his PhD dissertation to a hard drive problem and rewrote it in three days. On the surface, that sounds suspect, but the results had been built up over years.

Three days was the time-to-articulation, not the time-to-research.

Delegated speed can be trusted speed — with verification (or trust)

We cannot be experts in everything, so we collaborate — and purchase the services of barbers, chefs, and accountants.

Delegated speed is not the root cause of the problem. In some sense, delegated speed was always going to come for you, even before AI-based tools proliferated. As you advance in your career, you will probably become some kind of manager. Your projects will get done without you spending much time doing the thing yourself: you delegate.

To surface the actual problem with the speed AI brings to the table, we need to dig into the dynamics of delegation.

The hidden assumption is that even though you delegate, there are checks and balances at play: those you delegate to are people you trust, and there is some form of verification in place. Delegating with blind trust can fail.

That’s the unspoken complaint inside the retort: not that AI made it fast, but that nothing in the loop is verifying it. The gap is real, and worth closing.

Go slow to go fast

Navy SEALs have a saying: slow is smooth, smooth is fast. Practiced fluency is speed. That is the affirmative version of earned speed.

The misreading is the strawman: “slow means careful, fast means careless.” A Formula 1 driver overtaking me does not make them careless and me careful; it just means I am a terrible driver.

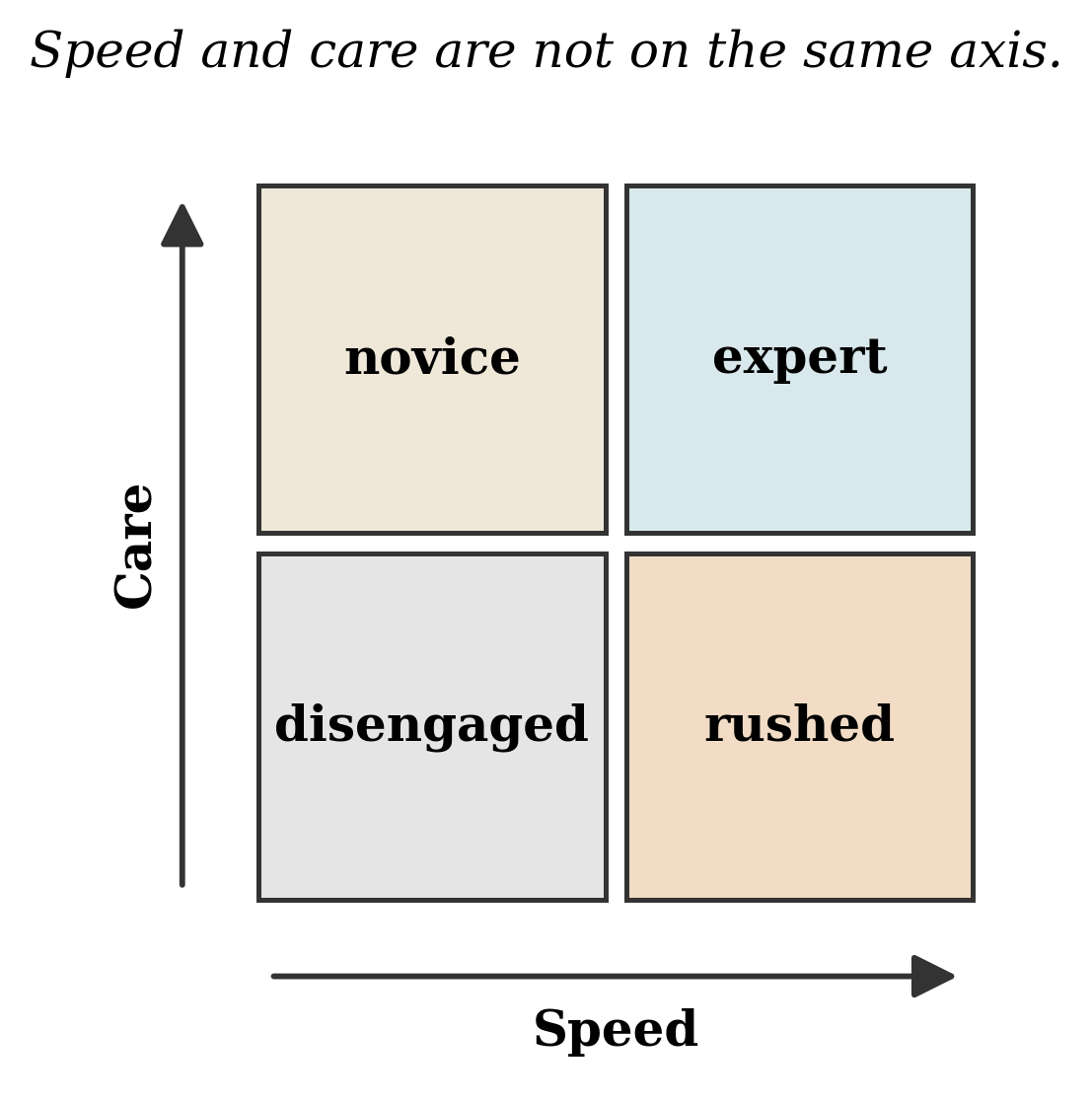

That strawman collapses two axes into one. A junior reviewer can take eight hours to produce a confused review; a senior can take ninety minutes and write something sharp.

Speed and care are different axes. Within one person at fixed expertise they correlate; across people, or across tooling regimes, they do not.

The concession lives inside that “within” clause: same person, same expertise, same tools, and faster can mean worse. You skip the ablations. You do not seek feedback. You do not double-check the references. But “all else equal” is exactly what the retort quietly assumes, and across people and tools it rarely holds. The interesting question is not how long someone took. It is whether they earned the speed or borrowed it.

In Andrew Huberman’s phrasing: as fast as I carefully can. Being careful is what makes earned speed legible and delegated speed accountable.

Two projects, two decisions

I had two recent projects, both leaning heavily on AI assistance. Both moved fast — but there the similarities end. We pulled the pin on the first and shipped the second.

The first started with theory we had been sitting with for months. Two weeks before the deadline, we decided to take a shot. For the experiments, I leaned heavily on Claude Code to build them from scratch, and the results looked promising. The problem was that we had no clear idea what was actually happening under the hood, or whether the core codebase faithfully implemented the theoretical setup. We ended up with more questions than answers. So we pulled the pin.

The second was different. Most of the coding was boilerplate, nothing novel; what mattered was that the experimental signal had to be real. We ran the evals, sanity checks, unit tests, and manual spot-checks. As far as we could verify, the results held up under pressure. So we shipped.

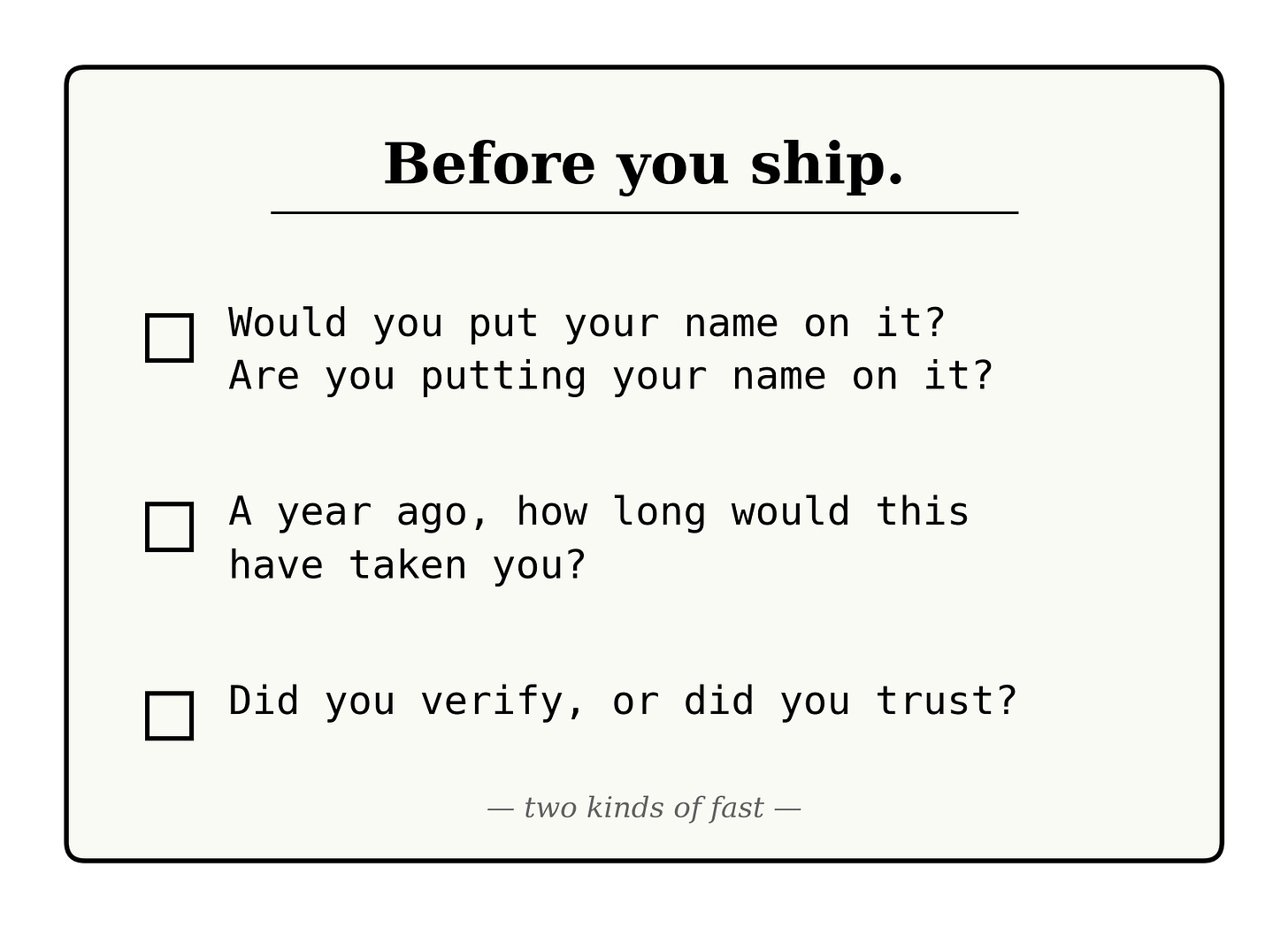

In both cases, the speed was AI-assisted. In the second, we could verify the results and trust that verification; in the first, we couldn’t, and that made the difference. I distilled how we decided into the audit below.

A self-audit: which kind of speed do you have?

Earned and delegated speed look identical from the outside, at least at first glance. Only you can tell which one you have. The question that gives me the most clarity is:

Would you put your name on it? Are you putting your name on it?

This is by far the most important question, because what you are potentially trading away is your reputation. It’s fine to make mistakes, but it’s not fine to be careless.

The checklist

A year ago, on a task of similar difficulty, how long did it take you? Have you sped up because you understand more, or because you outsource more?

Can you defend every methodological choice — the framing, the baselines, the citations — without saying “the model suggested it”?

If the output is subtly wrong in a way that won’t crash anything, will you catch it on first read? Second? Ever?

Did you verify, or did you trust?

Is the thinking clear, or only the conclusions?

Could the same prose have been generated by someone who never ran the experiment? Or does this sound like a Nostradamus prediction — true of anything, evidence of nothing?

Did you learn something from the process? If not, delegated speed might mean you’re digging your own grave.

What “fast” means now

My earlier post called for redefining fast. This is the redefinition: earned speed compresses what you already understand. Delegated speed substitutes for what you don’t.

The retort treats both as the same number. They are not.

Three days can be the time-to-articulation, not the time-to-research — that is the only kind of three-day paper worth boasting about.

Whether we have the verification infrastructure to catch hallucinations at scale is a separate problem — see bibtexupdater and hallmark for my first attempts, and my P-AGI position paper for the broader argument.

2. If the problem isn’t actually the same as the one you’ve seen before, working from trained intuition can mislead you.